Sense-making takes place in particular spaces and follows particular rhythms. Spaces are the formal and informal meetings and events that make up the everyday life of organisations and programmes. Rhythms are the patterns and structures in time through which an organisation can direct, mobilise and regulate its efforts.

Examples include annual reports, monthly team meetings, quarterly board meetings, end-of-project reports, independent evaluations, field trips, stakeholder consultations, phone calls with partners, weekly teleconferences, email discussions with peers and impromptu conversations. Each of these has different purposes (therefore different information needs); different rhythms and timing (therefore different levels of detail required); and different people involved (therefore different perspectives to draw on).

Sense-making can operate at the macro or micro level. The macro level relates to questions about strategy and the external context, looking at broad patterns and knowledge that can be applied elsewhere. At the micro level, the questions are about this particular intervention and these particular actors, and how to improve what is being done.

Different spaces will have a different balance of micro and macro. Our monitoring system should make room for both kinds of sense-making in the appropriate spaces and maintain the balance between looking immediately ahead and looking to the horizon.

Designing monitoring for sense-making

It is important that sense-making is not constrained dogmatically to any framework, as this could mean important unanticipated changes are missed.

Actions can have three broad effects:

- expected and predictable (e.g. you invite someone to a meeting and they turn up);

- expected and unpredictable (e.g. at some point after the meeting they remember what you were saying and recommend your work to a third party);

- and unexpected and unpredictable (e.g. that third party then shares your findings and claims credit for themselves).

Your intervention will in reality engage with all three types of effect at the same time. Monitoring should be open to each of these, although it is usually the unpredictable effects that require the most attention from sense-making. The unexpected effects are trickiest to identify but often yield the richest learning.

By structuring informal sense-making and employing formal causal analysis you can deal with these effects and ensure the right kind of data is supplied at the right time.

Practices for informal sense-making

Informal sense-making happens all the time – we notice things, judge them, weigh them and assign value and significance to them. But it predominantly happens as a social process when interacting with colleagues or partners or struggling with a report.

Monitoring can help make informal sense-making more systematic and conscious, and better linked to decision-making. The following practical tips can help make the most of these moments.

Establish a common language

ROMA provides structure to sense-making by establishing monitoring priorities and signposting where to look for outcomes. The process of deciding which key stakeholders to influence and developing the intended outcomes for each is itself extremely useful.

It provides a common language for a team to use when making observations and weighing up the importance of information – and to know what you should be making sense of. It also provides a schema on which to base conversations – even, at a very practical level, an agenda for a review meeting. To enable quick and responsive sense-making, it can be good practice to frame outcomes in terms of key stakeholders’ behaviour.

Example: consider stakeholder outcome measure 1 (see Table 2 in Realistic Outcomes) ‘attitudes of key stakeholders to get issues onto the agenda’. When using this measure for monitoring, you might create an indicator like ‘governing party officials have positive attitude to tackling [issue]’.

But how will you know their attitude has changed? A better indicator would describe what you would see if their attitudes were changing so when you see it you know it is significant. So you might use this instead: ‘governing party officials request evidence on [issue]’ or ‘governing party officials make speeches in favour of tackling [issue]’.

Another practical tip is to ask these three questions for each piece of information collected:

- Does this confirm our expectations?

- Does it challenge our assumptions?

- Is it a complete surprise?

These questions will quickly help you decide what to do with that information. If it confirms your expectations, use it as evidence to strengthen your argument, but bear in mind that it may also indicate your monitoring is too narrow and you need to consider broader views. If it challenges your assumptions, review your assumptions and strategies and consider whether the intervention is still appropriate. If it is a surprise, take time to consider its implications and whether the context is changing.
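The three questions can be treated as a simple triage rule. The following is a minimal sketch, assuming each piece of information has already been judged by a person; the category names, log entries and wording of the next steps are illustrative assumptions, not part of ROMA.

```python
from enum import Enum


class Finding(Enum):
    CONFIRMS = "confirms expectations"
    CHALLENGES = "challenges assumptions"
    SURPRISE = "complete surprise"


# Follow-up actions paraphrased from the guidance above.
NEXT_STEP = {
    Finding.CONFIRMS: "Use as evidence; check whether monitoring is too narrow.",
    Finding.CHALLENGES: "Review assumptions and strategy; is the intervention still appropriate?",
    Finding.SURPRISE: "Consider the implications; is the context changing?",
}


def triage(log):
    """Group (note, finding) pairs by the kind of follow-up they call for."""
    grouped = {finding: [] for finding in Finding}
    for note, finding in log:
        grouped[finding].append(note)
    return grouped


# Hypothetical log entries, invented for illustration only.
log = [
    ("Official requested evidence on [issue]", Finding.CONFIRMS),
    ("Committee ignored the briefing", Finding.CHALLENGES),
    ("Third party republished our findings", Finding.SURPRISE),
]

for finding, notes in triage(log).items():
    print(f"{finding.value}: {len(notes)} item(s) -> {NEXT_STEP[finding]}")
```

Grouping entries this way could give a review meeting a ready-made agenda, with the judgement about each entry still made by the team.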

Draw on a variety of knowledge sources

To identify and understand unexpected effects, you need to be open to diverse sources of knowledge and not be constrained to a narrow view of what is happening. This means drawing on multiple sources of data but also diverse perspectives for making sense of these data.

This might mean creating spaces that bring in different perspectives, for example inviting ‘critical friends’ into reflection meetings, discussing the data with beneficiaries to seek their input or searching for studies in other fields that shed light on what is going on.

One particular approach that specifically seeks to do this is the Learning Lab developed by the Institute of Development Studies. The Learning Lab is a three-hour structured meeting with participants invited because of common knowledge interests rather than common experiences (this means the meeting is not confined to a project team as such). The meeting is structured around four questions: what do we know, what do we suspect, what knowledge and practice already exist and what do we not know or want to explore further?

An important aspect is a 20- to 30-minute silent reflection that allows all participants to think through what they already know or have heard or seen about the chosen topic. Participants are asked to draw on their practical experiences, including literature, discussions and observations.

Use visual artefacts

Visualising data can greatly improve our ability to spot patterns and form judgements. But it can also take a lot of time and effort to produce meaningful graphs from a stack of data. Dashboards can help with this by automatically combining data from multiple sources and displaying it in a predefined way. Dashboards can be real-time, although this requires custom software or strong programming skills; or they can be produced on demand, but this requires more time and effort.

ODI has developed a dashboard that can track all kinds of analytics in real time using a data aggregator called QlikView. The ODI Communications Dashboard brings together website and social media statistics, impact log entries, media hits and more in one visual report that can be filtered by output or programme.

A simpler alternative to a dashboard is a traffic light system to alert you to events that require attention. For example, you might use a stakeholder database to track information on voting patterns in parliament. A quick way to make sense of this information would be to assign a colour to each stakeholder depending on how they are voting (green = as expected, yellow = unexpected but probably doesn’t matter, red = unexpected and will affect our programme).
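As a rough sketch of the traffic light idea, the colour rule can be written as a small function. The record layout, the stakeholder names and the `affects_programme` flag are assumptions made for illustration; in practice the flag reflects a judgement made by the team.

```python
def traffic_light(expected_vote, actual_vote, affects_programme):
    """Return a colour for one stakeholder's vote, using the scheme above."""
    if actual_vote == expected_vote:
        return "green"   # as expected
    if affects_programme:
        return "red"     # unexpected and will affect our programme
    return "yellow"      # unexpected but probably doesn't matter


# Hypothetical records: (name, expected vote, actual vote, affects programme?)
records = [
    ("MP A", "yes", "yes", True),
    ("MP B", "yes", "no", False),
    ("MP C", "no", "yes", True),
]

status = {name: traffic_light(exp, act, aff) for name, exp, act, aff in records}
needs_attention = [name for name, colour in status.items() if colour == "red"]

print(status)
print(needs_attention)
```

Only the red entries need to be raised at the next review; the rest can be scanned quickly.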

Relational data, such as who has been meeting with whom or who has shared your work with whom, can be visualised on a network map. This will allow for filtering and clustering of relationships to uncover patterns and understand the dynamics of policy communities.
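A network map can start from something as simple as a list of pairs. The sketch below uses only the Python standard library and hypothetical actor names; it treats each recorded share as an undirected link, which is a simplifying assumption, and groups actors into connected clusters.

```python
from collections import defaultdict

# Hypothetical "who shared our work with whom" records.
shares = [
    ("Researcher", "Adviser"),
    ("Adviser", "Committee chair"),
    ("Journalist", "NGO lead"),
]

# Build an undirected adjacency map from the pairs.
graph = defaultdict(set)
for a, b in shares:
    graph[a].add(b)
    graph[b].add(a)


def clusters(graph):
    """Return connected groups of actors, found by depth-first search."""
    seen, groups = set(), []
    for start in graph:
        if start in seen:
            continue
        group, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node in group:
                continue
            group.add(node)
            stack.extend(graph[node] - group)
        seen |= group
        groups.append(group)
    return groups


# The actor with the most links is a candidate broker in the network.
most_connected = max(graph, key=lambda actor: len(graph[actor]))

print(clusters(graph))
print(most_connected)
```

Dedicated network analysis tools offer richer filtering and layout, but even this level of grouping can show which policy communities are not yet connected to each other.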

Practices for formal causal analysis

Compare with the theory: process tracing

One of the most plausible ways to understand causes in complex contexts is to compare observations with a postulated theory. This section describes steps for developing a theory of change. For example, our theory says if we train junior parliamentary researchers to interpret and use scientific evidence they will use these skills to better advise parliamentary committees, which in turn will draft more appropriate and effective bills.

In this example, each stage can be tested by comparing the data on what the researchers did after their training, and the subsequent decisions of the committees they are working with, with the effect expected. It is then possible to confirm or rule out particular causal claims.
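The stage-by-stage comparison can be sketched as follows. The stage wording is taken from the training example above; the `observed` values are hypothetical, and reducing each stage to a yes/no observation is a deliberate simplification.

```python
# Stages of the training example above, in causal order.
stages = [
    "researchers trained to interpret evidence",
    "researchers use skills to advise committees",
    "committees draft more appropriate bills",
]

# Hypothetical monitoring results: was each stage observed?
observed = {
    "researchers trained to interpret evidence": True,
    "researchers use skills to advise committees": True,
    "committees draft more appropriate bills": False,
}


def first_break(stages, observed):
    """Return the first stage with no supporting observation, or None."""
    for stage in stages:
        if not observed.get(stage, False):
            return stage
    return None


print(first_break(stages, observed))
```

The first unsupported stage marks where the causal claim currently stops being confirmed and where further data collection is most useful.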

This is the basis of process tracing, a qualitative research approach used to investigate causal inference. Process tracing focuses on one or a small number of outcomes (possibly involving a process of prioritisation to choose the important ones) to verify they have been realised (e.g. a policy-maker makes a decision in line with recommendations).

It then applies a number of methods to unpack the steps by which the intervention may have influenced the outcome. It uses clues or ‘causal process observations’ to weigh up possible alternative explanations. There are four ways clues can be tested:

- ‘Straw-in-the-wind’ test: when a straw seems to be moving, it lends weight to the hypothesis that there is wind but it does not definitively rule it in or out (e.g. we sent our report to the policy-maker but do not know if it got to them).

- ‘Hoop’ test: a hypothesis is ruled out if it fails a test (e.g. was the report sent to them before the decision was made?).

- ‘Smoking gun’ test: seeing a smoking gun lends credence to the hypothesis that it was used in a crime but is not definitive by itself (e.g. we see our report on the desk of the policy-maker but don’t know if they have read it).

- ‘Double decisive’ test: where the clue is both necessary and sufficient to support the hypothesis (e.g. we observe precisely the same language in the decision of the policy-maker as in our recommendations).

Check timing of outcomes

A strategy that can help determine causal inference is laying out all the outcomes in a timeline to demonstrate the chronology of events. If you also place the intervention activities and outputs on the timeline then you can begin to establish causal linkages, visually applying the test that effect has to follow cause.

This can eliminate many competing claims about causal inference and help narrow down the important ones. There may also be timings inherent in the theory of change, so this can also be used to judge the plausibility of contribution.
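The chronology test can be sketched in a few lines. The activities, outcomes and dates below are hypothetical; the only rule applied is that an activity must precede an outcome to remain a candidate cause.

```python
from datetime import date

# Hypothetical dated activities and outcomes.
activities = {
    "report published": date(2012, 3, 1),
    "briefing held": date(2012, 9, 15),
}
outcomes = {"official requests evidence": date(2012, 6, 10)}


def plausible_links(activities, outcomes):
    """Keep only (activity, outcome) pairs where the activity came first."""
    return [
        (activity, outcome)
        for outcome, when_out in outcomes.items()
        for activity, when_act in activities.items()
        if when_act < when_out
    ]


print(plausible_links(activities, outcomes))
```

Passing this check does not establish contribution; it only removes links that are impossible on timing grounds, leaving a shorter list to examine with other methods.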

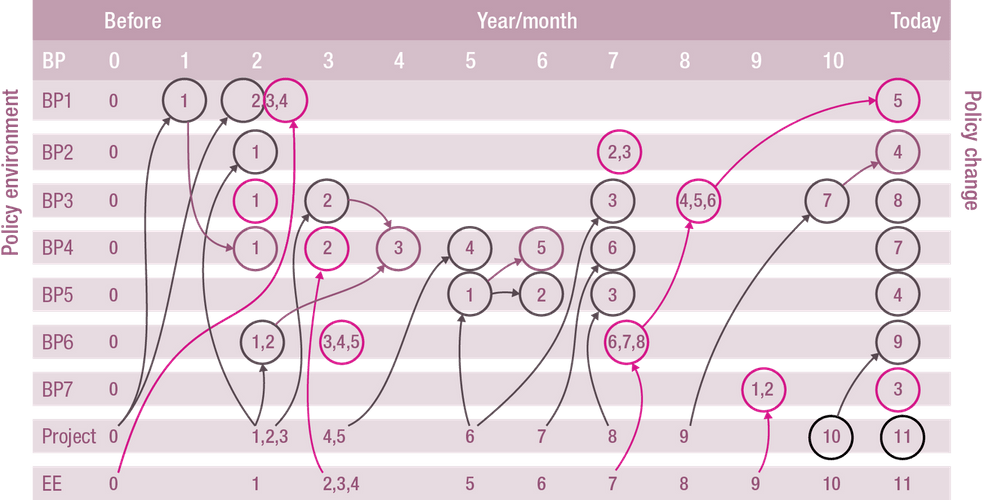

The RAPID Outcome Assessment (ROA) approach has been used to determine the contribution of research to policy change. In ROA, a timeline of milestone changes among pre-determined target stakeholders is mapped alongside project activities and other significant events in the context of the intervention.

A workshop is convened with people close to the intervention and the changes described. Participants work through each of the changes observed and use their knowledge and experience to propose the factors that influenced them (which could be the intervention or other factors) and draw lines between the different elements of the timeline.

Figure 8 is a timeline developed to analyse the Smallholder Dairy Project in Kenya, a research and development project that aimed to use findings to influence policy-makers. The analysis, based on the ROA approach, identified the key actors setting and influencing policies affecting the dairy sector in Kenya.

It then used interviews to find significant behavioural changes in these actors, triangulating them in a stakeholder workshop. This resulted in a set of linkages between the changes and key project milestones as well as external events.

Figure 8: Example of timeline showing changes observed in seven key stakeholders (BP1-7), project milestones and external environment (EE) for Smallholder Dairy Project, Kenya (from ODI, 2012)

Investigate possible alternative explanations

All the approaches described above have one thing in common: they look beyond the intervention for possible contributing factors. When working in open systems, we are rarely the sole actor trying to influence outcomes. It is vital, then, that whatever contribution is made is placed in the context of all the other actors and factors operating in the same space.

Investigating alternative explanations can help gauge the relative importance of the intervention, but can also help narrow down hypotheses to test if alternatives can be ruled out – which can strengthen the case for intervention contribution.

This is the basis of most theory-based evaluation approaches. It is the core purpose of the General Elimination Methodology developed by Michael Scriven, which systematically identifies and tests ‘lists of possible causes’ for an observed result of interest. As well as collecting data about the intervention, the study collects data about other possible influences so as to either confirm or rule them out.
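The elimination logic can be sketched as a list of candidate causes, each with a record of whether the evidence gathered is consistent with it. The causes and check results below are hypothetical, and a real evaluation weighs evidence through judgement rather than simple true/false checks.

```python
# Hypothetical candidate causes for an observed result. Each list records
# whether a piece of evidence was consistent with that cause (True) or not.
candidates = {
    "our intervention": [True, True],
    "another actor's campaign": [True, False],
    "change in external context": [False],
}


def remaining(candidates):
    """Rule out any candidate contradicted by at least one piece of evidence."""
    return [cause for cause, checks in candidates.items() if all(checks)]


print(remaining(candidates))
```

Recording the checks for every candidate, including the intervention itself, keeps the reasoning behind each elimination open to review.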

The General Elimination Methodology was used in an evaluation of a public education campaign to end the juvenile death penalty in the US. The campaign, funded with $2 million from a collaboration of foundations, ran for nine months from 2004 to 2005 during a US Supreme Court hearing to review a number of cases of juvenile offenders facing the death penalty.

On 1 March 2005, the Supreme Court ruled that juvenile death penalties were unconstitutional. The evaluation sought to determine to what extent the campaign influenced this decision.

Following the General Elimination Methodology, the evaluation started with two primary alternative explanations: 1) that Supreme Court justices make their decisions entirely on the basis of the law and their prior dispositions rather than being swayed by external influences; and 2) that external influences other than the final push campaign had more impact.

The evaluation gathered evidence through 45 interviews, detailed review of hundreds of court arguments and decisions and legal briefs, analysis of more than 20 scholarly publications and books about the Supreme Court, news analysis, reports and documents describing related cases, legislative activity and policy issues and the documentation of the campaign itself, including three binders of media clips from campaign files.

Working through all this evidence, the evaluators were able to systematically eliminate the alternative explanations to the extent needed to arrive at an evidence-based, independent and reasonable judgement that the campaign did indeed have a significant influence on the Supreme Court decision.

Summary

This section started out with the aim of providing practical advice for people working to influence policy to build reflective and evaluative practice into their work to support decision-making and demonstrate progress.

What to monitor introduced nine ‘learning purposes’ – the overarching reasons for undertaking any kind of M&E activity that should drive the design and use of M&E. It proposed 35 individual measures for policy-influencing interventions across six categories (strategy, management, outputs, uptake, outcomes and context), and suggested how these could be used for the learning purposes.

How to monitor discussed how data could be collected both in real time, as the intervention is being carried out, and in retrospect, through detailed studies.

Finally, Making sense turned to the important task of making sense of those data and putting them to use in decision-making and demonstrating impact.

Since the theme of the section has been evaluative practice, it is apt to conclude with a few final pointers on good practice:

- Put use at the heart of your monitoring, evaluation and learning to make sure any enquiry makes a positive contribution.

- Be grounded in theory from the beginning and test each stage as you go.

- Consider competing theories so as not to close down unintended effects.

- Embrace failure as just as good an opportunity to learn from as success.

- Invest in your monitoring and learning in proportion to the scale of your intervention: sometimes it is appropriate to use simple measures.

- Be conscious of rhythms and spaces in which learning occurs: it happens at different paces and different levels.

Finally, there is a traditional African proverb that encapsulates the attitude to take when developing M&E systems for policy influence: ‘we make our path by walking it’. Start by looking at what people are already doing, where data are already collected and the spaces that already exist for sense-making, and then work to strengthen and support those.

If existing patterns are ignored, efforts may be wasted because people will always drift towards the familiar and the easy.